Understanding Google Gemini's Watermark Policy

Know what the watermark actually is

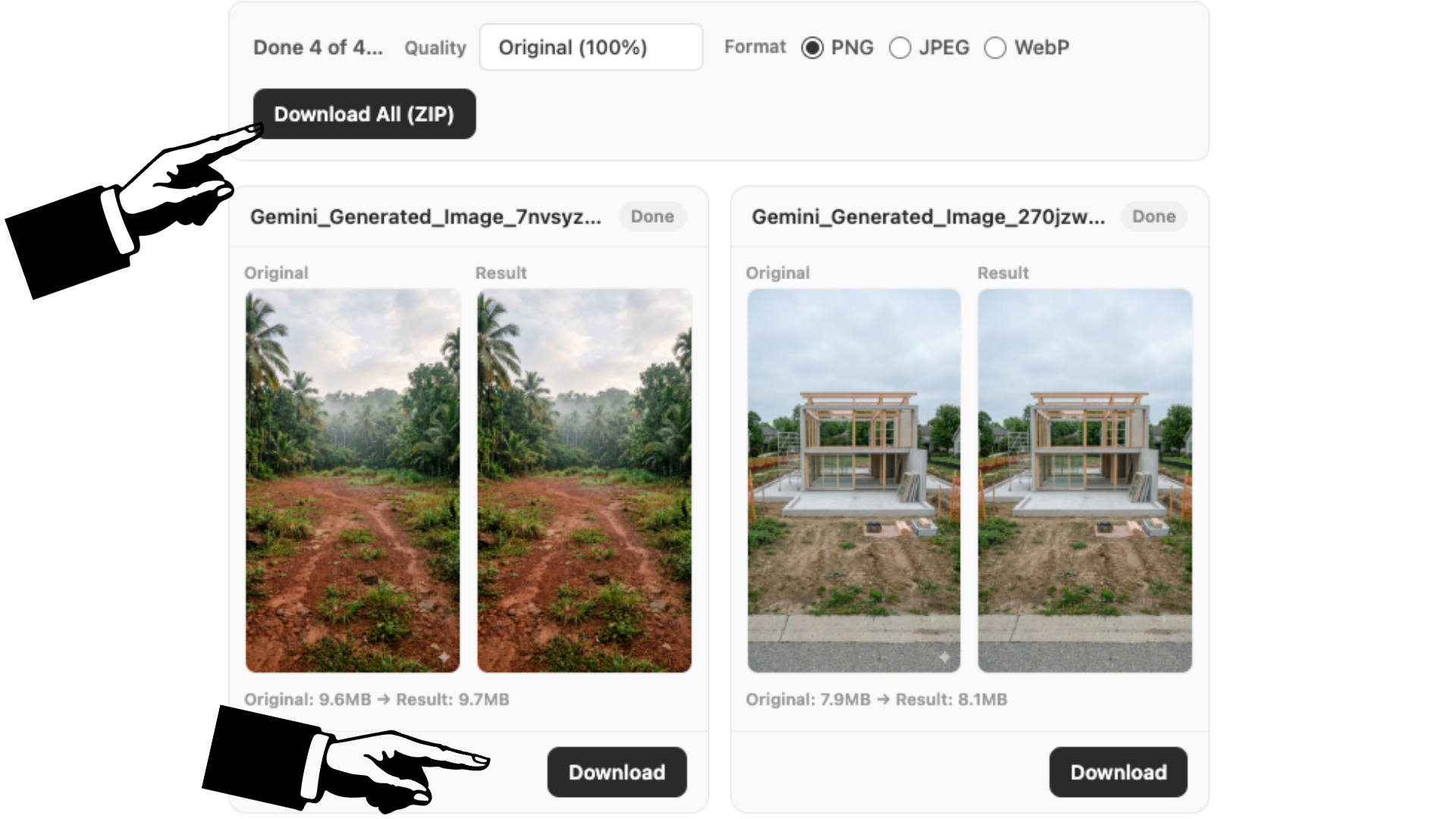

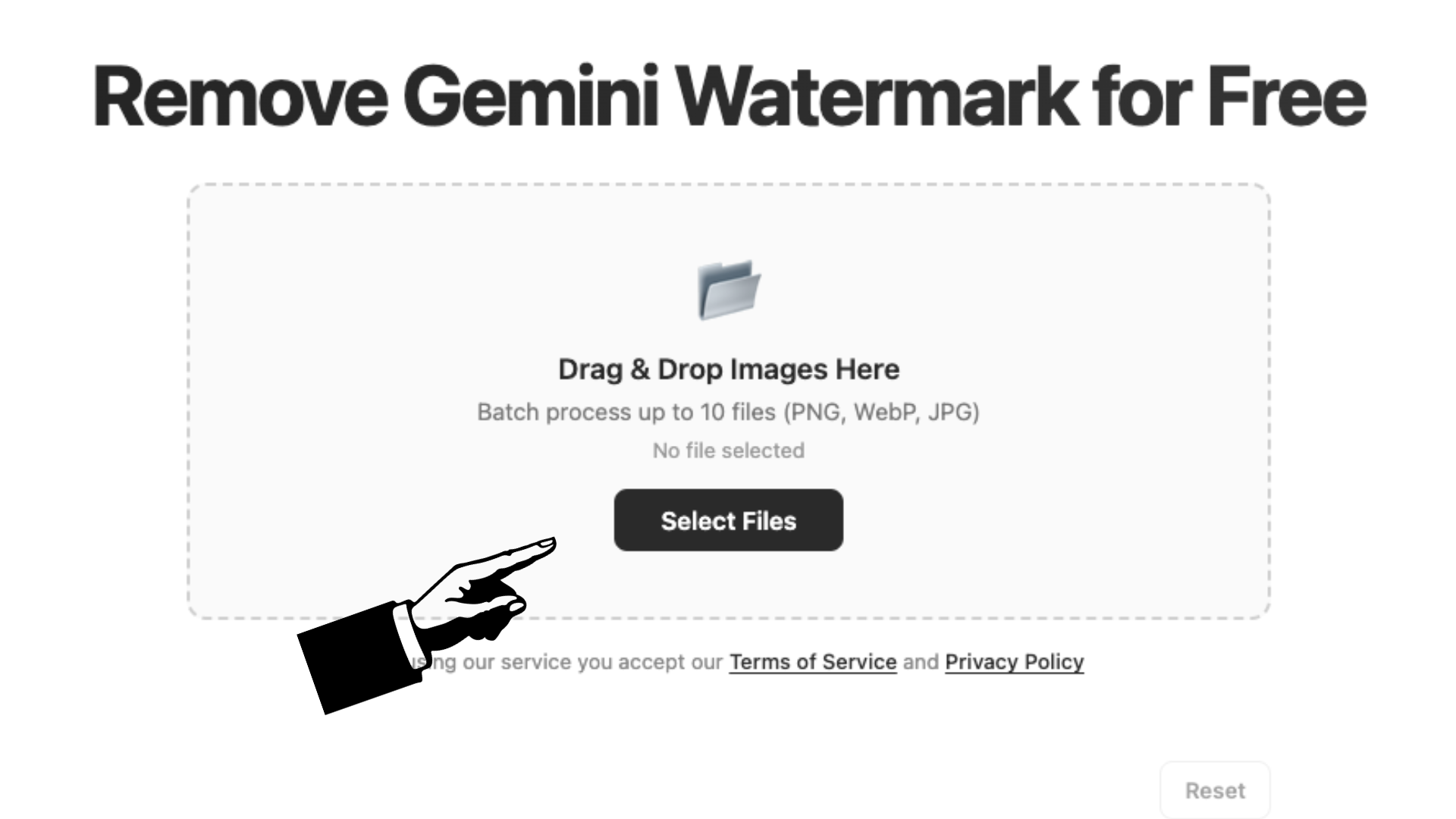

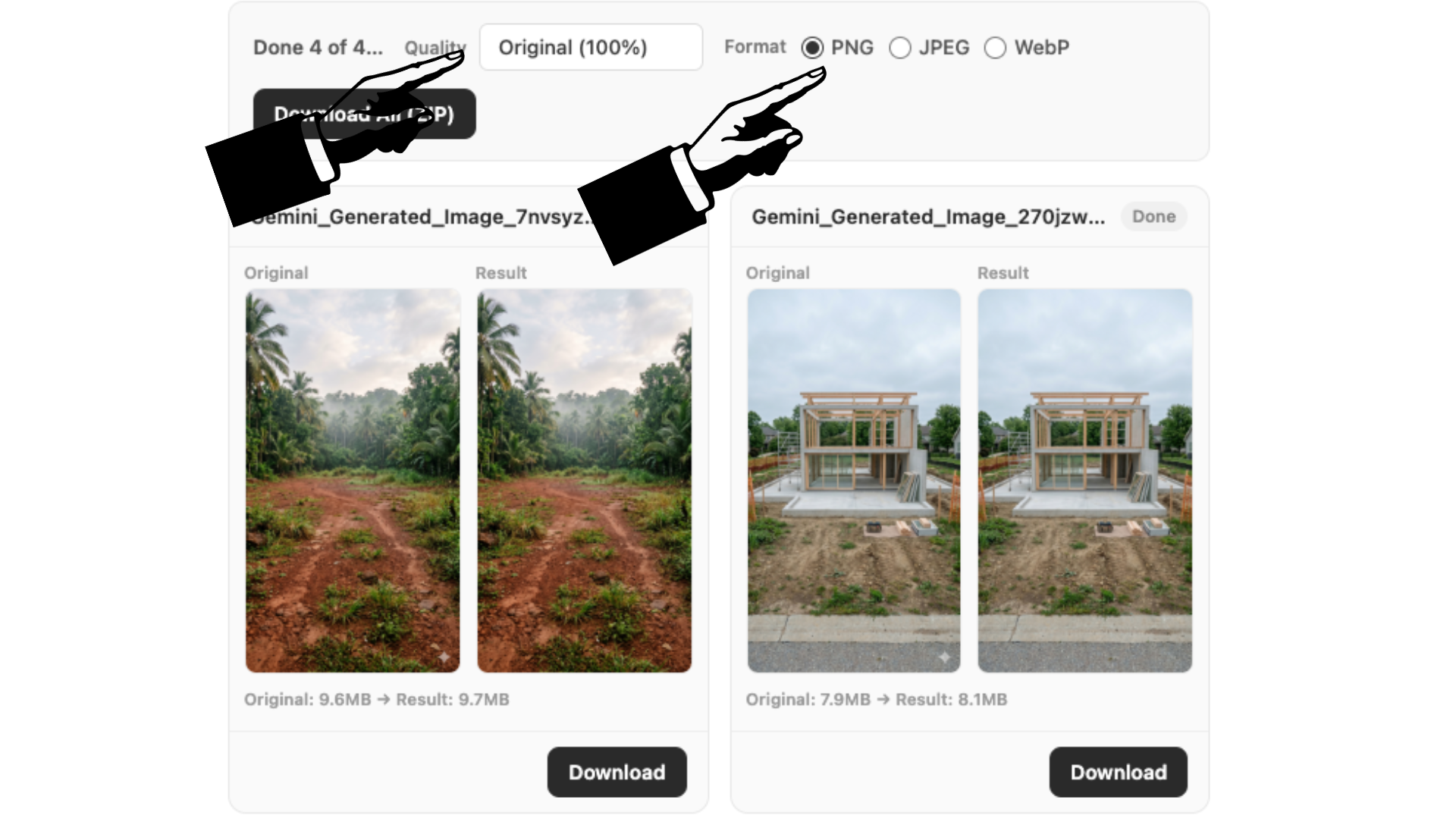

Before diving into legal questions, it helps to understand what you're dealing with. Gemini adds two types of watermarks to every image it generates: a visible semi-transparent logo in the bottom-right corner, and an invisible digital fingerprint called SynthID. The visible one is what most people notice and want to remove; it's essentially Google's way of saying "this was made by AI." The invisible one is embedded in the image data itself and is designed to survive edits, screenshots, and format conversions. Our tool only deals with the visible logo.

Google's stance on watermark removal

Google hasn't published a specific policy document that says "thou shalt not remove the Gemini watermark." Its terms of service focus more on misuse of the AI tool itself: generating harmful content, violating copyright, or impersonating others. That said, Google clearly added the watermark for a reason: transparency. If you're removing it to deliberately deceive people (for instance, passing off AI art as your own handmade work or creating misleading images), you're moving into ethically questionable territory regardless of what the fine print says.

Make an informed decision

At the end of the day, whether you choose to remove the watermark comes down to context. Are you cleaning up a personal photo for a family slideshow? That's worlds apart from stripping watermarks off images to sell as original artwork. Most people using our tool fall somewhere in the middle—designers incorporating AI visuals into client work, content creators polishing images for social media, or professionals preparing presentation materials. Whatever your use case, being upfront about AI involvement when it matters is the best policy. The tool gives you the clean image; being honest with your audience is on you.